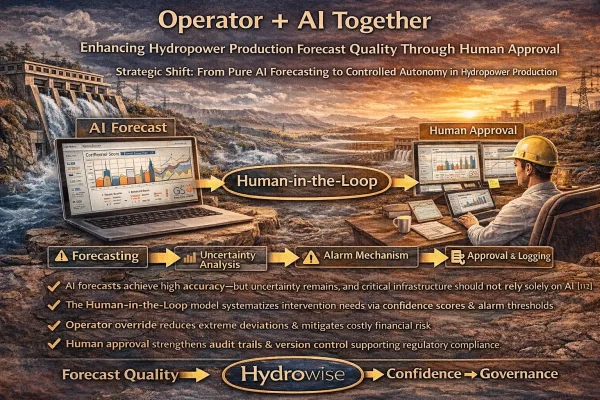

Operator + AI Together: Enhancing Hydropower Production Forecast Quality Through Human Approval (Human-in-the-Loop)

Strategic Shift: From Pure AI Forecasting to Controlled Autonomy in Hydropower Production

Hydropower production forecasting is no longer merely a question of “Can the AI generate an accurate prediction?”

At the enterprise level, the real questions are far more structural:

- Under what conditions should a model be considered reliable?

- Which signals require human intervention?

- Who ultimately owns the final decision?

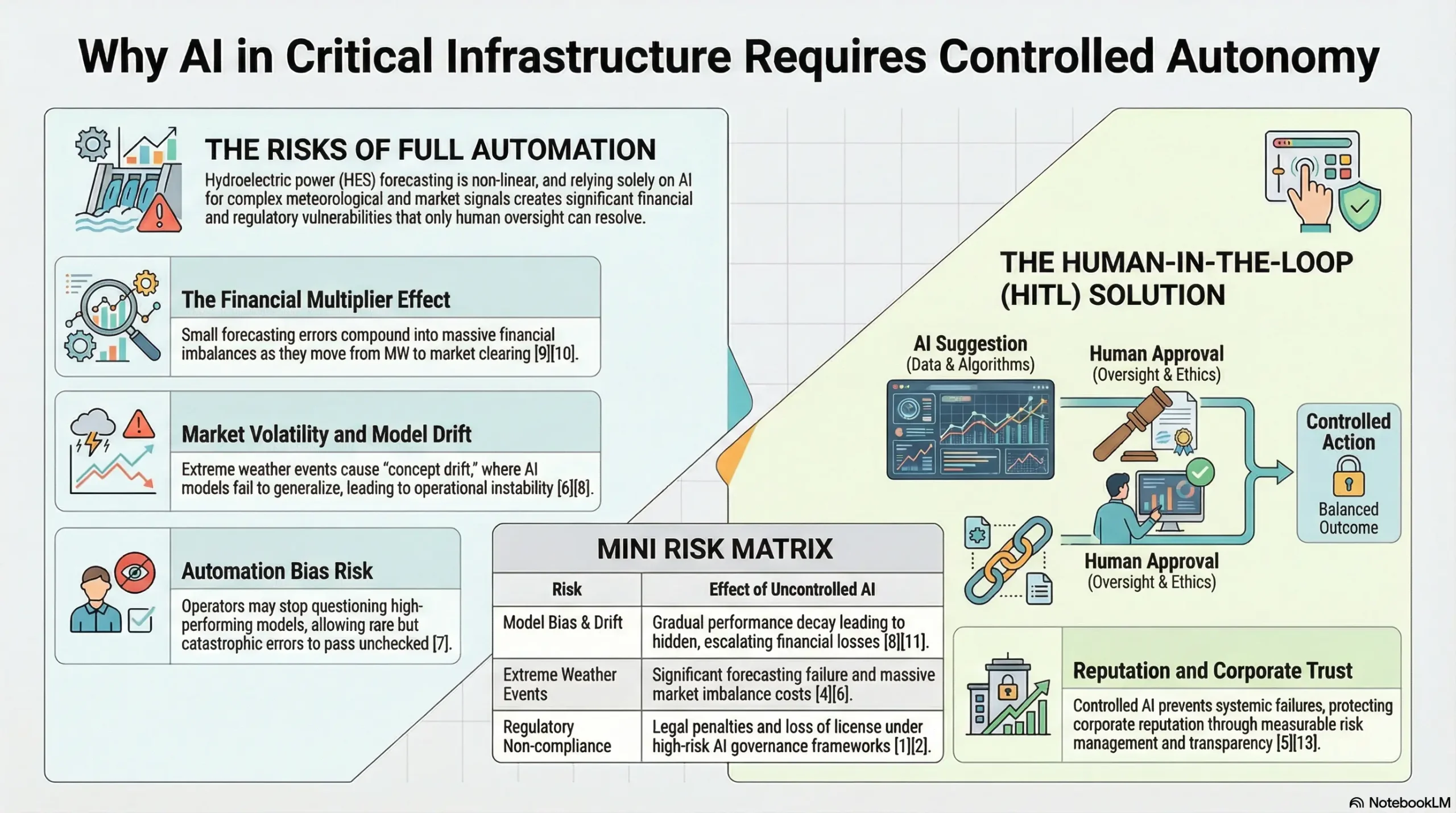

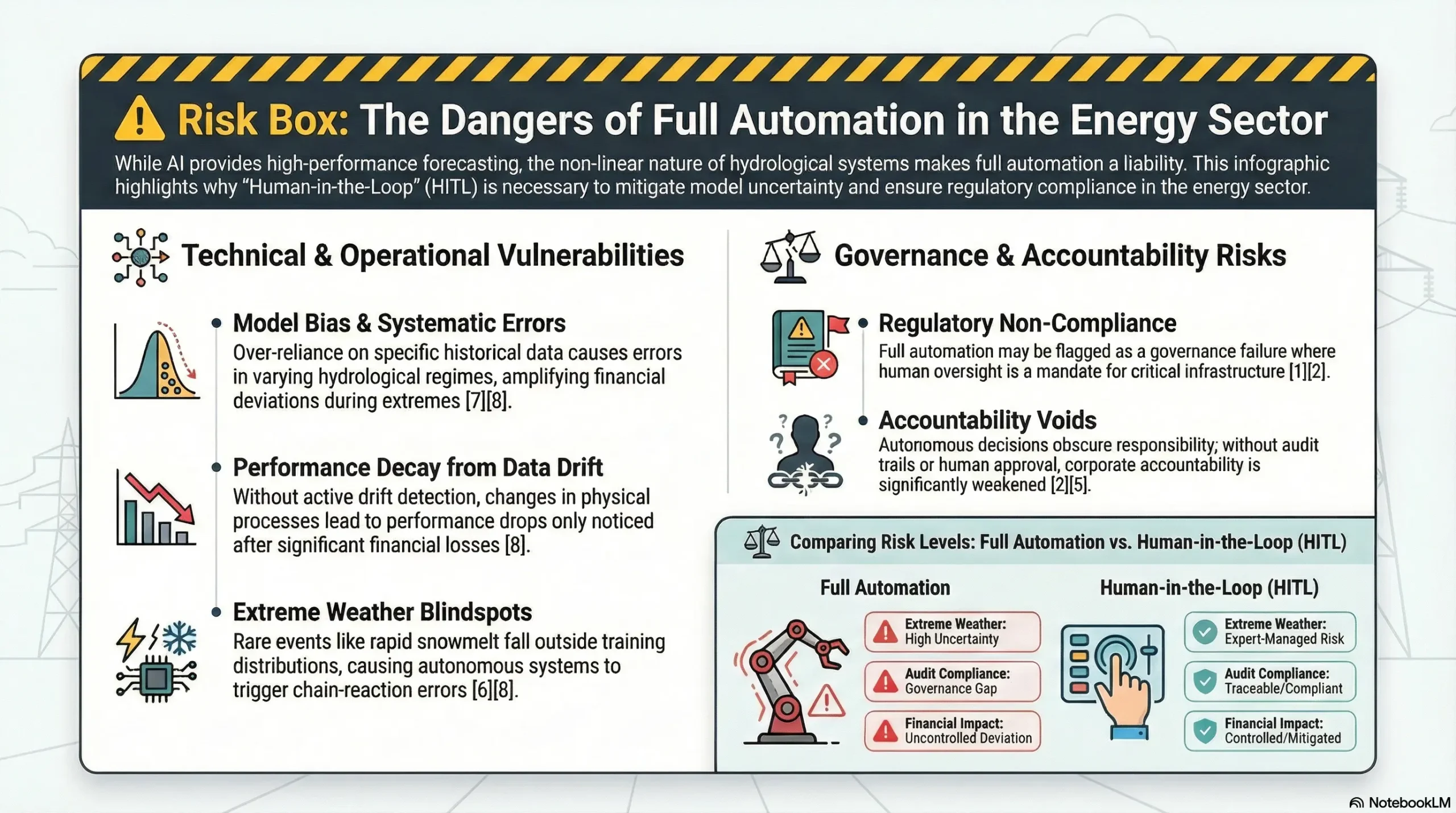

Hydroelectric generation is shaped by a multi-layered interaction of meteorological uncertainty, basin hydrodynamics, reservoir levels, turbine performance curves, and electricity market price signals. In such a dynamic and non-linear system, accuracy metrics alone are not sufficient. Decision reliability, traceability, and the ability to intervene when necessary are just as critical as predictive precision.

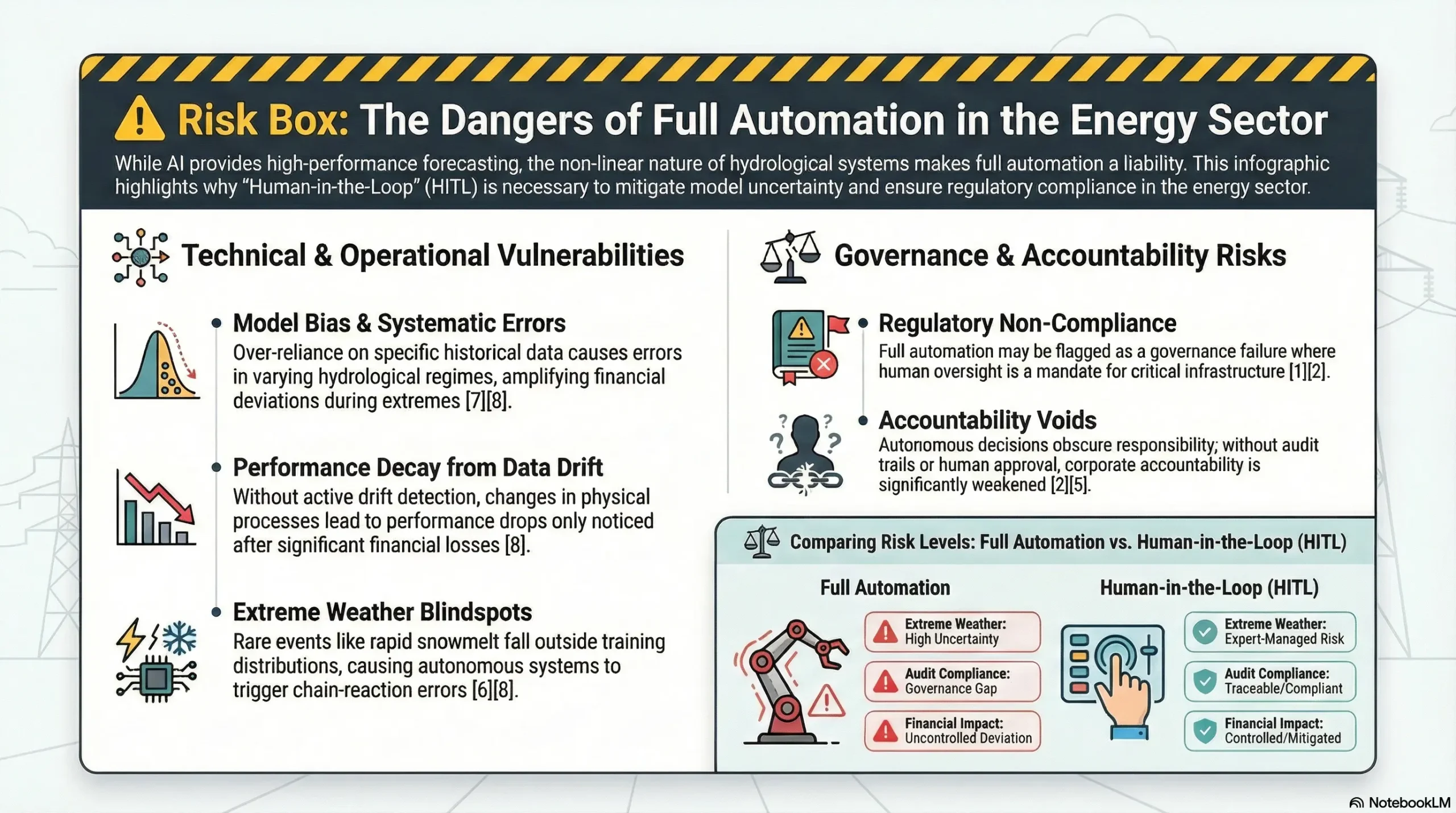

AI systems can achieve strong performance when trained on historical data. However, hydrological systems are inherently non-deterministic. Extreme weather events, abrupt temperature shifts, rain-on-snow scenarios, or degradation in data quality may push the model outside its training distribution. In these situations, model uncertainty increases significantly.

If the system operates in a fully automated mode, this uncertainty propagates directly into operational planning—and indirectly into financial outcomes.

In critical infrastructure environments, full automation represents not only a technical risk but also a governance risk. The EU AI Act explicitly mandates human oversight in high-risk systems [1]. Likewise, the NIST AI Risk Management Framework recommends that AI-driven decisions remain measurable, traceable, and embedded within a clear accountability structure [2].

For the energy sector, the sustainable paradigm is clear:

AI proposes. Humans approve.

The Human-in-the-Loop (HITL) model transforms AI forecasts into structured decision-support outputs, with final approval resting on human expertise. This approach does not weaken automation—it institutionalizes control. As a result, the forecasting system becomes not only more accurate, but also more reliable, auditable, and enterprise-ready.

- AI forecasts can achieve high accuracy; however, uncertainty remains, and critical infrastructure should not rely on fully autonomous decision-making mechanisms [1][2].

- The Human-in-the-Loop model systematizes intervention requirements through confidence scores and alarm thresholds.

- A structured operator override mechanism reduces extreme deviations and mitigates financial risk exposure.

- Human approval strengthens regulatory compliance by enabling audit trails and version control.

- The Hydrowise approach embeds AI–operator interaction within an enterprise SaaS architecture, transforming predictive intelligence into measurable quality improvement.

Conceptual Framework and Core Definitions

1. What Is Human-in-the-Loop (HITL)?

Human-in-the-Loop (HITL) is a controlled autonomy architecture in which AI systems remain subject to human oversight prior to final decision execution. This approach does not disable automation; rather, it balances the speed and analytical capacity of AI with human expertise—context interpretation, risk intuition, and awareness of operational constraints.

At the enterprise level, HITL is not merely a philosophical stance; it is a structured control procedure. It answers critical governance questions:

- Who approves the forecast?

- At which thresholds does approval become mandatory?

- Which conditions require escalation?

- Which decisions are logged?

- Which KPIs are used for monitoring performance?

Three core principles define the HITL model:

- Controlled Autonomy: AI generates outputs automatically; human operators intervene when necessary.

- Transparency: Uncertainty ranges and confidence scores are visible and interpretable.

- Accountability Chain: The final decision is approved by a human authority.

In enterprise practice, these principles translate into a structured workflow:

Forecast generation → Risk assessment → Approval → Logging → Performance monitoring.

In this sense, HITL does not mean “a human is looking at the model.” It represents a rule-based, auditable, and scalable decision architecture [2][14].

2. Model Uncertainty and Energy Systems

Uncertainty in hydropower production forecasting is unavoidable. It consists of two primary components:

- Aleatoric uncertainty: Inherent randomness in natural systems (variability in weather and hydrological processes).

- Epistemic uncertainty: Error potential arising from limited model knowledge (data scarcity, insufficient representation capacity) [3][6].

Epistemic uncertainty tends to increase under extreme conditions. For example, rapid snowmelt triggered by sudden temperature increases may represent a regime the model has only partially observed historically.

Similarly, changes in basin conditions—such as soil moisture, snow water equivalent (SWE), or baseflow—can cause identical precipitation levels to produce different discharge responses. This elevates concept drift risk, where the functional relationship between input and output shifts over time [6][8].

3. Confidence Score and Alarm Threshold Design

A confidence score is typically derived from one or more of the following signals [4][11]:

- Ensemble variance

- Probabilistic prediction interval width

- Distribution-shift or out-of-distribution (OOD) indicators
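As a minimal sketch of the first signal, assume the forecasting model produces an ensemble of discharge values for a given hour. A confidence score could then be derived from the width of the ensemble's 10th to 90th percentile range relative to its median. The function name, the percentile choice, and the linear mapping (a relative width of 0 gives 100%, a width at or above `max_rel_width` gives 0%) are illustrative assumptions, not a calibrated or prescribed method.

```python
from statistics import median, quantiles


def confidence_from_ensemble(members: list[float], max_rel_width: float = 0.5) -> float:
    """Map ensemble spread to a 0-100 confidence score (hypothetical linear mapping)."""
    if len(members) < 2:
        raise ValueError("need at least two ensemble members")
    # Deciles of the ensemble: the first cut point ~ P10, the last ~ P90.
    cuts = quantiles(members, n=10)
    p10, p90 = cuts[0], cuts[-1]
    center = median(members)
    # Width of the P10-P90 interval relative to the central (median) forecast.
    rel_width = (p90 - p10) / abs(center) if center else float("inf")
    # Narrow interval -> high score; rel_width >= max_rel_width -> 0. Clipped to [0, 100].
    score = 100.0 * (1.0 - rel_width / max_rel_width)
    return max(0.0, min(100.0, score))


tight = confidence_from_ensemble([495, 498, 500, 502, 505])  # members agree closely
wide = confidence_from_ensemble([380, 430, 500, 560, 620])   # members disagree strongly
```

With small ensembles, the default `exclusive` method of `statistics.quantiles` extrapolates slightly beyond the smallest and largest members, which widens the interval and makes the score more conservative. In practice, the mapping would need to be calibrated against observed errors before its values are compared with alarm thresholds.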

In enterprise systems, displaying a confidence score is not sufficient. The critical question is: what action does this score trigger?

For this reason, alarm threshold design forms the core of institutional control.

An example threshold framework:

- 85%+ → Automatic approval / fast track: The forecast can be used directly within routine operations.

- 70–85% → Operator review required: A short validation and contextual comment are added before execution.

- Below 70% → Mandatory manual validation: The forecast is treated as operationally or financially critical; revision or escalation is required.

Threshold determination may vary across plant types. Regulated reservoirs, run-of-river plants, and pumped-storage systems each have different risk tolerances and intervention costs.

Therefore, instead of a single universal threshold, parametric threshold sets aligned with plant profiles are more sustainable and governance-aligned [2][9].
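The threshold framework can be sketched as a small routing function with one threshold set per plant profile. The `default` band edges (85% and 70%) are the example values above; the `run_of_river` and `pumped_storage` entries, and all names in the code, are hypothetical placeholders that show the parametric structure, not recommended settings.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "automatic approval / fast track"
    OPERATOR_REVIEW = "operator review required"
    MANUAL_VALIDATION = "mandatory manual validation"


@dataclass(frozen=True)
class ThresholdSet:
    fast_track: float  # score at or above this: automatic approval
    review: float      # score at or above this (and below fast_track): operator review

    def __post_init__(self) -> None:
        if not 0.0 <= self.review <= self.fast_track <= 100.0:
            raise ValueError("expected 0 <= review <= fast_track <= 100")


# Default band edges from the example framework; the other profiles are hypothetical.
PLANT_PROFILES = {
    "default": ThresholdSet(fast_track=85.0, review=70.0),
    "run_of_river": ThresholdSet(fast_track=90.0, review=75.0),
    "pumped_storage": ThresholdSet(fast_track=80.0, review=65.0),
}


def route_forecast(confidence: float, profile: str = "default") -> Route:
    """Map a confidence score (0-100) to the approval route of a plant profile."""
    thresholds = PLANT_PROFILES[profile]
    if confidence >= thresholds.fast_track:
        return Route.AUTO_APPROVE
    if confidence >= thresholds.review:
        return Route.OPERATOR_REVIEW
    return Route.MANUAL_VALIDATION
```

With these settings, a 58% score is routed to mandatory manual validation under every profile, while an 87% score is fast-tracked under `default` but sent to operator review under the hypothetical `run_of_river` set.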

4. AI Governance and Critical Infrastructure

The EU AI Act defines requirements for high-risk systems, including human oversight, transparency, traceability, and structured risk management [1].

The NIST AI Risk Management Framework introduces a “govern–map–measure–manage” structure that integrates AI risks into enterprise processes [2].

In the context of hydropower production forecasting, these frameworks raise fundamental operational questions:

- Who owns the forecasting decision?

- On which datasets was the model trained, and under what conditions is it considered reliable?

- How is model performance monitored, and how is drift managed?

- How are human override decisions logged and fed back into the system?

Human-in-the-Loop provides operational answers to these questions. Human approval is not merely a safety layer—it is a governance and audit layer embedded directly into the forecasting workflow.

Why Human-in-the-Loop Is a Strategic Necessity

AI systems generate forecasts based on historical data. However, hydrological systems are inherently non-linear, and extreme weather events may push the model outside its training distribution [6].

For this reason, Human-in-the-Loop (HITL) is not merely an additional safety layer—it is a quality strategy. It ensures that human expertise is activated precisely when the cost of error is highest.

1. Automation Bias

Operators may place unquestioned trust in models that have historically demonstrated strong performance [7]. This phenomenon is particularly common in organizations where “the model is usually right.” Decision speed pressure and operational workload may incentivize treating model outputs as the default truth.

The critical risk of automation bias is this:

If the model fails in a rare scenario, the decision may be executed without meaningful human scrutiny.

HITL mitigates this behavioral risk by embedding intervention into procedure. Rather than relying on discretionary review, the system enforces structured triggers:

Low confidence score → Mandatory review.

By converting oversight into policy, HITL reduces dependence on individual vigilance and strengthens institutional resilience.

2. Data Drift and Concept Drift

Over time, data distributions may shift (data drift), or the underlying physical relationships may change (concept drift) [8].

Examples include:

- Sensor calibration degradation

- Outdated rating curves

- Structural changes in basin conditions

- Long-term climate variability

- Operational rule modifications

In such cases, model inputs may gradually shift without immediate visibility. If drift is not detected, model performance declines incrementally. Organizations often recognize the deterioration only after financial outcomes worsen.

Therefore, HITL alone is not sufficient. HITL must operate alongside [8][11]:

- Monitoring systems

- Alarm mechanisms

- Version control and model governance

Together, these elements create a continuous control loop rather than a reactive correction mechanism.
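For the monitoring element, one widely used drift statistic is the Population Stability Index (PSI). It compares the binned distribution of an input (for example, a temperature or discharge series) in a recent window against a reference window: PSI = Σ (cᵢ - rᵢ) · ln(cᵢ / rᵢ) over bins i, where rᵢ and cᵢ are the reference and current bin shares. PSI is a swapped-in example technique, not necessarily what the cited sources use; the ten equal-width bins and the 0.25 alarm level are common rules of thumb, assumed here.

```python
import math


def population_stability_index(reference: list[float], current: list[float],
                               bins: int = 10, eps: float = 1e-6) -> float:
    """PSI between a reference window and a current window of one input feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference series

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    ref_shares, cur_shares = shares(reference), shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


def drift_alarm(reference: list[float], current: list[float], level: float = 0.25) -> bool:
    """Raise an alarm when PSI exceeds the (assumed) alert level."""
    return population_stability_index(reference, current) > level
```

A check like this would run per input feature on a schedule, with its alarms feeding the same review workflow as low confidence scores.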

3. The Financial Multiplier Effect: Why Errors Become “Expensive”

A small deviation in hydropower production forecasting can cascade through the value chain:

Discharge → Production → Day-Ahead Market Bid → Realization → Imbalance Settlement → Financial Reconciliation [9][10]

The critical insight is that error does not grow only in megawatts—it grows in financial impact.

Under high price volatility, the same MW deviation may produce disproportionately larger monetary consequences. Even if average error metrics appear acceptable, rare extreme deviations during volatile market hours may represent the true institutional risk exposure [4][9].
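The multiplier effect can be illustrated with deliberately simplified arithmetic, assuming that the imbalance cost of each hour is the absolute MW deviation multiplied by that hour's imbalance price. Real settlement rules (single or dual pricing, penalties, portfolio netting) differ by market and are not modelled, and the prices below are hypothetical.

```python
def imbalance_cost(deviation_mw: list[float], price_per_mwh: list[float]) -> float:
    """Simplified hourly imbalance cost: sum of |deviation| x price (1-hour intervals)."""
    if len(deviation_mw) != len(price_per_mwh):
        raise ValueError("one price per hourly deviation expected")
    return sum(abs(d) * p for d, p in zip(deviation_mw, price_per_mwh))


# Hypothetical day with the same 10 MW deviation in every hour.
deviation = [10.0] * 24
calm_prices = [60.0] * 24                    # flat, low-volatility day
volatile_prices = [60.0] * 20 + [600.0] * 4  # four price-spike hours

calm_cost = imbalance_cost(deviation, calm_prices)          # 14,400
volatile_cost = imbalance_cost(deviation, volatile_prices)  # 36,000
```

Both days have the same mean absolute deviation of 10 MW, yet the volatile day costs 36,000 instead of 14,400 (in the currency of the price series), and its four spike hours account for two thirds of the total. This is the gap between an acceptable average error metric and the actual financial exposure.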

HITL is particularly valuable in these “low probability, high impact” situations. By introducing structured human review when uncertainty increases, it reduces tail-risk exposure and improves the overall risk profile of the forecasting system.

Operational Workflow – AI + Operator Interaction

The operational workflow consists of five core layers:

- Forecasting Layer: The AI model generates discharge and production forecasts.

- Uncertainty Analysis: The confidence score and prediction interval are calculated and made visible.

- Alarm Mechanism: If the confidence score falls below a predefined threshold, manual validation becomes mandatory.

- Operator Approval: The forecast is either approved as-is or overridden based on contextual expertise.

- Logging and Version Control: Every action is stored as part of an auditable trail, including revisions, approvals, and timestamps.
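The logging and version-control layer can be pictured as an append-only history per forecast, in which approvals and revisions add new versions instead of overwriting earlier ones. This is a minimal in-memory sketch: the class names, fields, status strings, identifiers, and role names are hypothetical, and a production system would persist the records with access control rather than keep them in a Python list.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ForecastVersion:
    version: int
    value_m3s: float             # forecast discharge for this version
    status: str                  # "draft", "approved" or "revised"
    actor: str                   # model identifier or operator role
    reason_label: Optional[str]  # structured reason, required for revisions
    timestamp: datetime


@dataclass
class ForecastRecord:
    forecast_id: str
    versions: list[ForecastVersion] = field(default_factory=list)

    def _append(self, value: float, status: str, actor: str,
                reason: Optional[str]) -> ForecastVersion:
        version = ForecastVersion(len(self.versions) + 1, value, status, actor,
                                  reason, datetime.now(timezone.utc))
        # Only ever appended to; existing versions are immutable (frozen) objects.
        self.versions.append(version)
        return version

    def draft(self, value: float, model: str) -> ForecastVersion:
        return self._append(value, "draft", model, None)

    def revise(self, value: float, operator: str, reason_label: str) -> ForecastVersion:
        if not reason_label:
            raise ValueError("a revision requires a reason label")
        return self._append(value, "revised", operator, reason_label)

    def approve(self, operator: str) -> ForecastVersion:
        if not self.versions:
            raise ValueError("nothing to approve")
        # Approval confirms the latest value without changing it.
        return self._append(self.versions[-1].value_m3s, "approved", operator, None)

    @property
    def current(self) -> ForecastVersion:
        return self.versions[-1]


# Values taken from the intervention scenario in this article.
record = ForecastRecord("plant-A/hour-10")
record.draft(500.0, model="forecast-model")
record.revise(470.0, operator="shift_operator", reason_label="temperature anomaly")
record.approve(operator="head_of_dispatch")
```

Because the draft value of 500 m³/s remains in version 1, the record can later show both what the model proposed and what was finally approved.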

At the enterprise level, two additional dimensions must be embedded into this workflow:

- Role-Based Access Control (RBAC): Who is authorized to approve? Who can revise? Who holds final decision authority?

- Escalation and SLA Framework: If a forecast below the confidence threshold is not resolved within a defined time window, to which unit is it escalated, and what response-time commitments apply?

Without these two dimensions, HITL remains at the level of “a human is reviewing.”

Enterprise-grade HITL transforms human approval into a structured governance procedure [2][14].
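Both dimensions can be expressed as small, explicit rules. In the sketch below, the roles, permissions, escalation chain, and 30-minute limit are hypothetical examples; which roles exist and which response times apply are organizational decisions.

```python
from datetime import datetime, timedelta

# Hypothetical role model: the actions each role may perform.
PERMISSIONS = {
    "analyst": {"comment"},
    "shift_operator": {"comment", "revise", "approve"},
    "head_of_dispatch": {"comment", "revise", "approve", "final_approve"},
}

# Hypothetical escalation chain and response-time limit per level.
ESCALATION_CHAIN = ["shift_operator", "head_of_dispatch"]
SLA_PER_LEVEL = timedelta(minutes=30)


def is_allowed(role: str, action: str) -> bool:
    """RBAC check; unknown roles have no permissions."""
    return action in PERMISSIONS.get(role, set())


def responsible_role(alarm_raised_at: datetime, now: datetime) -> str:
    """Role that owns an unresolved alarm, escalating one level per missed SLA period."""
    missed_periods = max(0, int((now - alarm_raised_at) / SLA_PER_LEVEL))
    return ESCALATION_CHAIN[min(missed_periods, len(ESCALATION_CHAIN) - 1)]
```

For example, an alarm raised at 06:00 and still unresolved at 06:45 would be owned by `head_of_dispatch`, the only role in this sketch with `final_approve` permission.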

🔎 Technical Note

Human Override Process:

- AI generates the forecast

- Confidence score is calculated

- Alarm threshold is checked

- Operator performs revision (if required)

- Version record is stored

Source: [2][14]

This architecture safeguards not only forecast quality but also institutional accountability. During audits or post-event reviews, the system provides a clear answer to the question:

“Why was this decision made?”

The answer is available because the rationale, approval path, override history, and performance context are all recorded within the system, where they remain traceable and verifiable.

Impact from a Hydropower Plant Operations Perspective

In energy facilities, this mechanism produces direct financial outcomes—but its impact is not purely financial. The operational implications of Human-in-the-Loop (HITL) can be assessed across three dimensions:

- Operational Stability: The pressure of unexpected intra-shift corrections decreases, enabling more predictable operational control.

- Reservoir Strategy: Water management becomes more consistent, preserving flexibility for forward-day planning.

- Market Reliability: Bid quality improves, and imbalance risk becomes more predictable and manageable [9][10].

Consider the following example:

- AI forecast: 520 m³/s

- Actual discharge: 480 m³/s

- Deviation: 40 m³/s
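To see what the 40 m³/s deviation means for production, the standard hydropower relation P = ρ · g · Q · H · η (water density, gravitational acceleration, discharge, net head, overall efficiency) can be applied. The 100 m net head and 0.9 efficiency are hypothetical values chosen only to show the order of magnitude, and the sketch assumes the entire discharge passes through the turbines at constant head and efficiency.

```python
RHO_WATER = 1000.0  # density of water, kg/m^3
G = 9.81            # gravitational acceleration, m/s^2


def hydro_power_mw(discharge_m3s: float, net_head_m: float, efficiency: float) -> float:
    """Electrical output in MW from P = rho * g * Q * H * eta (watts divided by 1e6)."""
    return RHO_WATER * G * discharge_m3s * net_head_m * efficiency / 1e6


# Hypothetical plant: 100 m net head, 0.9 overall efficiency.
forecast_mw = hydro_power_mw(520.0, 100.0, 0.9)
actual_mw = hydro_power_mw(480.0, 100.0, 0.9)
deviation_mw = forecast_mw - actual_mw  # about 35.3 MW
```

Under these assumptions, the deviation corresponds to roughly 35 MW, or about 35 MWh for every hour it persists, which is the quantity that then flows into the bid and imbalance chain.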

This deviation may trigger a cascade of consequences [9]:

- Incorrect Day-Ahead Market bid

- Imbalance settlement costs

- Disruption of reservoir management strategy

- Increased collateral requirements

From an operations standpoint, unexpected deviations can also affect turbine operating points and efficiency bands. This may result in secondary losses, such as generating less energy with the same volume of water [15].

For this reason, HITL is not merely about “correcting the forecast.” It represents an enterprise-level improvement in operational quality and risk control.

An additional benefit of HITL is its contribution to building a learning organization. Operator override decisions serve as valuable labels that reveal under which conditions the model struggles.

If properly logged and structured, these labels accelerate the model improvement cycle [8][11], for example by:

- Expanding extreme event datasets

- Updating feature engineering

- Strengthening sensor data quality controls

In this sense, HITL not only reduces risk—it enhances institutional intelligence over time.

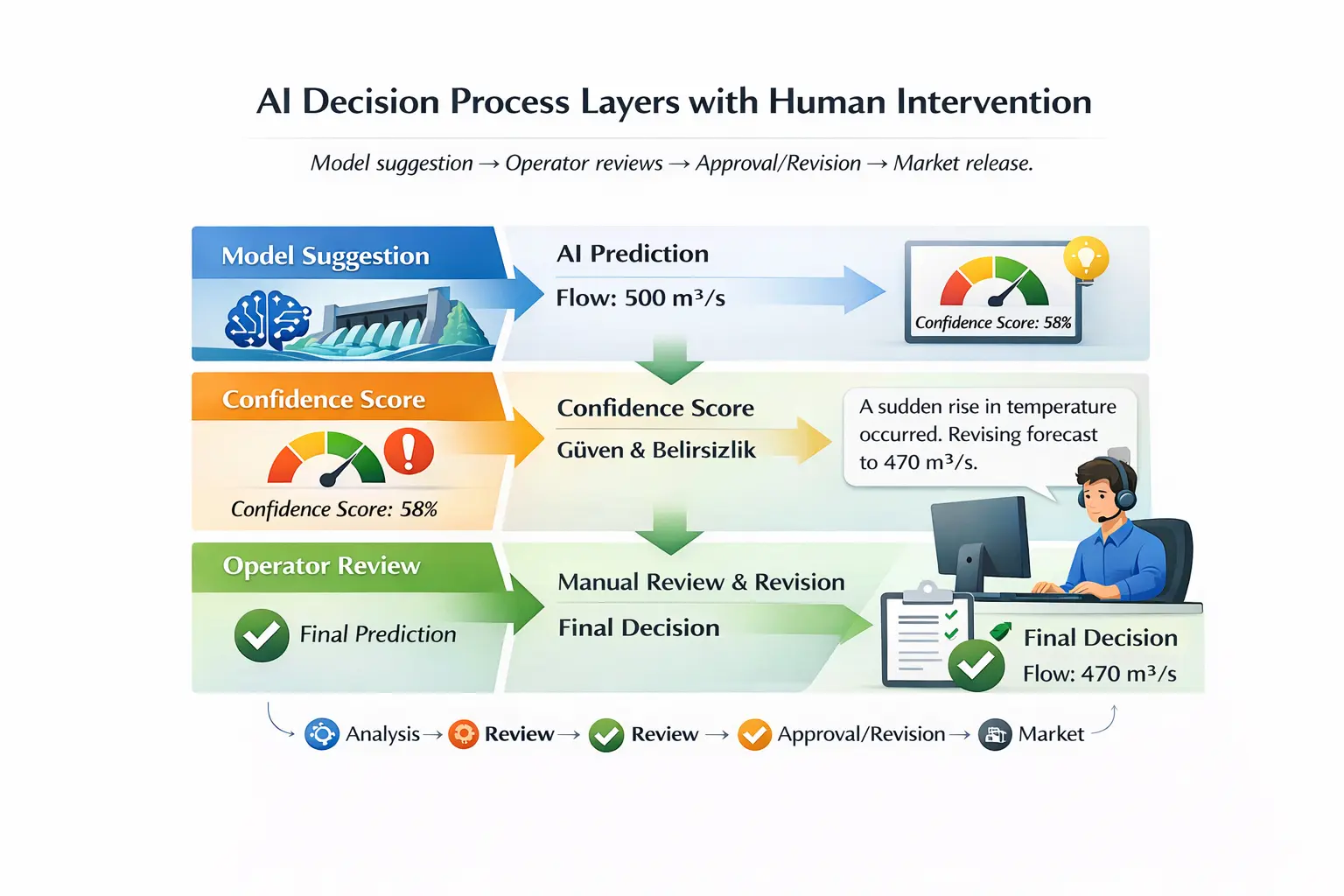

Example Scenario: Risk Mitigation Through Intervention

Scenario:

- AI forecast: 500 m³/s

- Confidence score: 58%

- Alarm threshold: 70%

The operator identifies a sudden temperature increase in the meteorological model and revises the forecast to 470 m³/s.


This intervention may prevent significant financial losses. However, from an enterprise governance perspective, the greater value lies elsewhere:

The revision is logged in the system with a structured reason label—for example:

“rain-on-snow risk,” “temperature anomaly,” or “suspected sensor drift.”

This creates both an audit trail and a structured feedback signal. The model development team can later analyze under which conditions human intervention occurred and quantify these patterns across time.

In this way, intervention is not treated as an exception—it becomes measurable institutional intelligence.
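Quantifying intervention patterns can start with a simple aggregation over logged revisions. The sketch assumes each override is stored as a (reason label, AI forecast, revised value) tuple; the labels reuse the examples above, while the log entries are invented for illustration and the function name is hypothetical.

```python
from collections import defaultdict


def summarize_overrides(log: list[tuple[str, float, float]]) -> dict[str, dict[str, float]]:
    """Per reason label: number of overrides and mean relative revision in percent."""
    changes_by_label: dict[str, list[float]] = defaultdict(list)
    for label, ai_value, revised_value in log:
        # Relative change applied by the operator; negative means revised downward.
        changes_by_label[label].append(100.0 * (revised_value - ai_value) / ai_value)
    return {
        label: {"count": len(changes), "mean_revision_pct": sum(changes) / len(changes)}
        for label, changes in changes_by_label.items()
    }


# Illustrative log entries (not real data).
override_log = [
    ("temperature anomaly", 500.0, 470.0),
    ("temperature anomaly", 420.0, 399.0),
    ("rain-on-snow risk", 300.0, 345.0),
    ("suspected sensor drift", 250.0, 240.0),
]
summary = summarize_overrides(override_log)
```

Tracked per month or per season, such a summary shows which conditions trigger interventions most often and whether operators consistently revise in one direction, a hint of systematic model bias under those conditions.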

Example Interface: What Should a Decision-Support Screen Display?

In an enterprise-grade HITL environment, simply displaying a forecast is insufficient.

The operator must be presented with a structured decision context.

A robust Human-in-the-Loop interface should include:

- Hourly discharge and production forecast

- Confidence score and uncertainty interval (±)

- Alarm trigger explanation (e.g., “low confidence score,” “drift signal detected”)

- Error distribution over the past 7/30 days (MAPE / RMSE trend) [4]

- Data quality indicators (missing data, latency, sensor outliers)

- Operator comment field and revision reason label

- Approval status (draft / approved / revised) with timestamp

- Approver identity (role-based) and version number

This interface design elevates HITL beyond “a human is reviewing” to “a human-controlled decision architecture” [2][14].
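The 7/30-day error trend on such a screen can be computed from paired forecasts and observations. A minimal sketch, assuming hourly values ordered in time (so a 7-day window is the last 168 values). Because MAPE is undefined when the observed value is zero, such hours are skipped here, which is one of several possible conventions. The function names are illustrative.

```python
import math


def mape(forecast: list[float], actual: list[float]) -> float:
    """Mean absolute percentage error (%), skipping hours whose observed value is zero."""
    if len(forecast) != len(actual):
        raise ValueError("series must have equal length")
    pairs = [(f, a) for f, a in zip(forecast, actual) if a != 0]
    if not pairs:
        raise ValueError("MAPE undefined: no non-zero observations")
    return 100.0 * sum(abs(f - a) / abs(a) for f, a in pairs) / len(pairs)


def rmse(forecast: list[float], actual: list[float]) -> float:
    """Root mean squared error, in the unit of the series (e.g. m^3/s or MW)."""
    if not forecast or len(forecast) != len(actual):
        raise ValueError("series must be non-empty and of equal length")
    return math.sqrt(sum((f - a) ** 2 for f, a in zip(forecast, actual)) / len(forecast))


def error_trend(forecast: list[float], actual: list[float],
                days: tuple[int, ...] = (7, 30)) -> dict[int, tuple[float, float]]:
    """(MAPE, RMSE) over the trailing window of each length, assuming hourly data."""
    result = {}
    for d in days:
        n = 24 * d
        result[d] = (mape(forecast[-n:], actual[-n:]), rmse(forecast[-n:], actual[-n:]))
    return result
```

Showing both metrics is deliberate: MAPE is scale-free and comparable across plants, while RMSE stays in physical units and weights large deviations more heavily.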

The Hydrowise / Renewasoft Approach

The Hydrowise Forecast module structures forecasting within a three-layer control architecture:

- Hybrid Modeling: Integration of physical hydrological models with AI-based predictive models.

- Risk Scoring: Confidence score calculation and structured uncertainty analysis.

- Human Approval Workflow: Operator validation, override capability, and full version tracking.

This architecture:

- Generates alarms when drift signals are detected

- Enforces manual review when confidence scores fall below threshold

- Logs override actions as structured decision records

- Supports regulatory compliance requirements

- Monitors performance not only through “average error,” but through tail deviations and high-risk hours [4][9][11]
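A generic way to look beyond average error (not a description of Hydrowise's implementation) is to report tail statistics next to the mean. The sketch assumes hourly absolute errors and a boolean flag per hour marking high-risk hours, for example hours with expected price spikes; the flagging rule and the 95th-percentile level are illustrative choices.

```python
from statistics import mean, quantiles


def tail_report(abs_errors: list[float], high_risk: list[bool]) -> dict[str, float]:
    """Summarize average and tail behaviour of hourly absolute forecast errors."""
    if len(abs_errors) != len(high_risk) or len(abs_errors) < 2:
        raise ValueError("need at least two hours and one flag per hour")
    risky = [e for e, flag in zip(abs_errors, high_risk) if flag]
    return {
        "mean": mean(abs_errors),
        # Last of 19 cut points for n=20 is the 95th percentile.
        "p95": quantiles(abs_errors, n=20, method="inclusive")[-1],
        "max": max(abs_errors),
        # NaN when no hour is flagged as high-risk.
        "mean_high_risk": mean(risky) if risky else float("nan"),
    }
```

A single large miss in a flagged hour barely moves the mean but dominates `max` and `mean_high_risk`, which is exactly the signal an average-only dashboard would hide.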

From an enterprise perspective, Hydrowise’s key differentiator is that it transforms forecasting from a standalone “model output” into an integrated governance workflow.

Forecasts become shared decisions across operations and trading units:

Approved forecast → Measurable risk → Traceable process.

Hydrowise also aims to protect operators from unnecessary manual workload. The objective is not to introduce hourly friction but to activate human intervention only under risk-relevant conditions.

Alarm threshold and confidence score design provide this balance.

The outcome is institutional equilibrium:

- Speed and control.

- Automation and accountability.

- Accuracy and governance.

Frequently Asked Questions

- Is Human-in-the-Loop mandatory?

In critical infrastructure environments, it is strongly recommended. The EU AI Act’s emphasis on human oversight supports this governance model [1].

- Can AI operate without a confidence score?

Sustainable risk management is not possible without measuring uncertainty. The confidence score is the primary trigger signal within HITL architecture [4][11].

- Does operator intervention weaken the model?

When properly designed, the opposite occurs: extreme errors decrease, and the model improvement cycle accelerates because override labels become structured learning data [8][11].

- How is drift detected?

Through a combination of statistical distribution analysis, data quality monitoring, and performance trend tracking [8][11].

- Does this approach deliver financial benefits?

Yes. Reducing extreme deviations can lower imbalance settlement costs and collateral pressure in volatile market conditions [9][10].

- Doesn’t human approval introduce “human error” risk?

In enterprise HITL architecture, this risk is mitigated through role-based authorization, second-level approval mechanisms, and structured audit trails [2][14].

Forecast Quality = Accuracy + Confidence + Governance

Hydropower production forecasting is no longer defined solely by mathematical accuracy.

Quality is now measured by reliability, traceability, and structured human oversight.

The Human-in-the-Loop approach:

- Reduces extreme forecast errors

- Lowers financial risk exposure

- Strengthens regulatory compliance

- Enhances institutional trust

- Establishes a shared decision framework between operations and trading units [1][2][9]

Hydrowise delivers this architecture within a scalable SaaS environment.

If your forecasting system does not incorporate a confidence score, alarm thresholds, and a structured human approval workflow, risk remains invisible.

To make your hydropower forecasting safer, more controlled, and enterprise-ready, request a Hydrowise demo.