How Can Energy Production Forecasting Systems Remain Reliable in Live Environments?

The Model Appears to Be Working. But Is It Truly Reliable?

A production forecasting model deployed in a hydropower plant may initially demonstrate high accuracy. Achieving 90% accuracy on training data, low MAE, and stable performance charts can satisfy technical teams. However, three months later, the same model’s performance may gradually decline. The increase in error is not dramatic; therefore, no alert is triggered. The dashboard continues to operate. Reports are generated. Yet the model no longer accurately represents the physical system.

In the energy sector, forecast error is not merely a statistical deviation. The Day-Ahead Market operates on an hourly bid-matching mechanism [1]. Deviations in hourly production forecasts directly translate into balancing costs and revenue loss. Errors during peak hours generate disproportionately high financial impact. Therefore, model accuracy is not only a technical KPI but also a corporate risk indicator.

Machine learning models are mathematically static; however, the physical systems they represent are dynamic and evolving. For this reason, MLOps (Machine Learning Operations) forms the foundation of sustainable reliability in energy forecasting systems [2].

- Drift in energy forecasting systems is inevitable.

- Data drift and concept drift represent different risk categories [3].

- Average error metrics alone are insufficient.

- Silent model degradation may result in financial losses running into the millions.

- Corporate MLOps requires data health monitoring, drift analysis, financial impact modeling, version control, and human approval.

Concepts and Background: Drift, Stationarity, and Energy Systems

Energy production forecasting systems are typically trained on historical data. This approach assumes statistical stationarity. However, real-world hydrometeorological processes are inherently non-stationary.

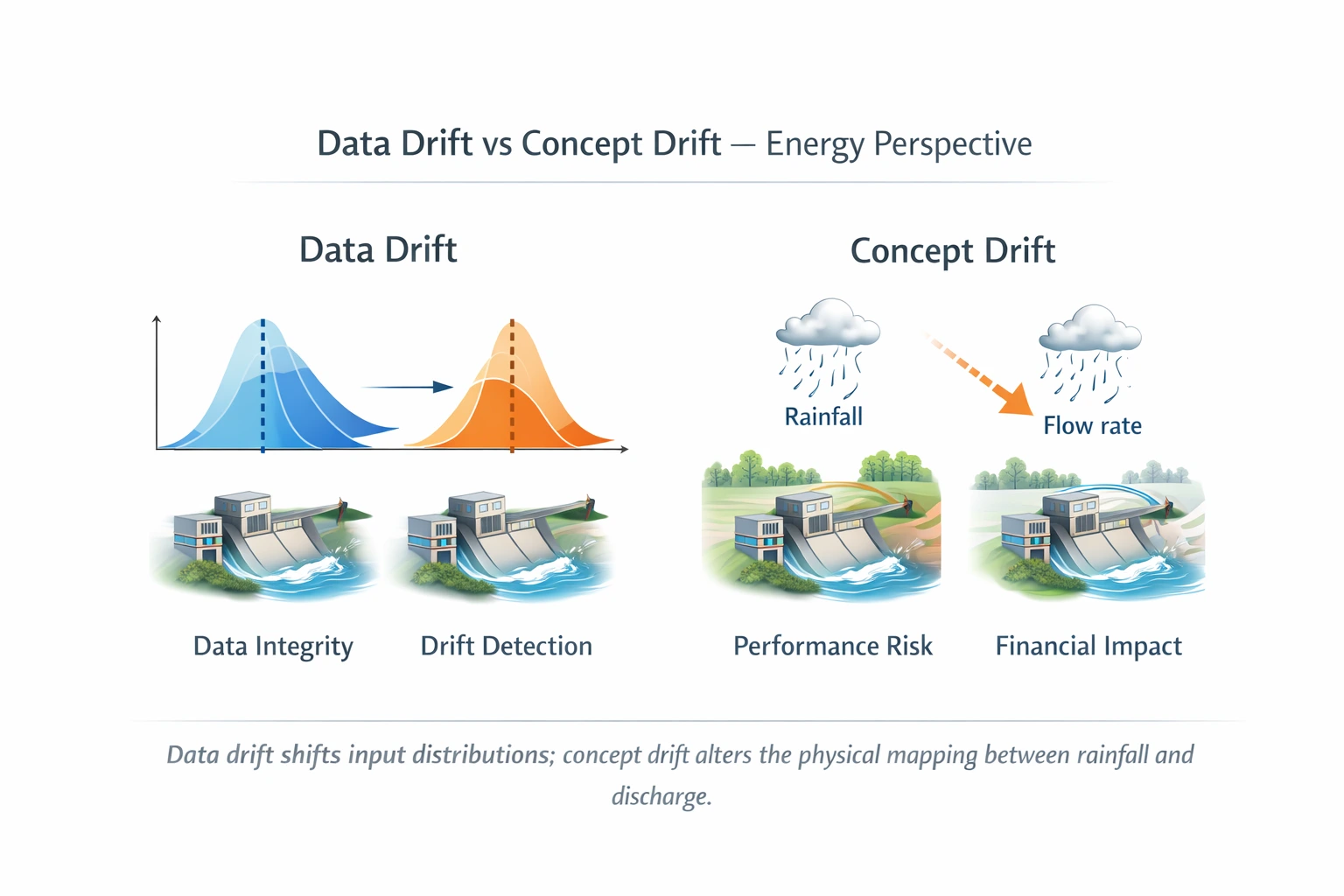

Model drift can be examined under two primary categories: data drift and concept drift.

1. Data Drift

Data drift refers to changes in the statistical distribution of input variables. For example, an increase in the frequency of extreme precipitation events [4] may cause deviation from the distribution observed during training. In such cases, the model continues to assume historical distribution characteristics.

2. Concept Drift

Concept drift is more profound. It occurs when the relationship between inputs and outputs changes over time [3]. The same rainfall amount may no longer produce the same discharge. Possible causes include:

- Changes in soil saturation structure

- Sediment accumulation

- Increased channel roughness

- Basin land-use transformation

Concept drift represents a loss of physical representativeness.

3. Mathematical Interpretation of Concept Drift

A machine learning model learns the relationship:

$P(Y \mid X)$

Under concept drift conditions, the conditional probability distribution changes over time:

$P_t(Y \mid X) \neq P_{t+1}(Y \mid X)$

This situation may require not only retraining but also re-evaluation of the model architecture [3].
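The two drift categories can be placed side by side using the standard factorization of the joint distribution. This is a sketch for orientation; the symbol $P_t(X)$ for the input distribution at time $t$ is notation introduced here, not taken from the cited sources.

```latex
\begin{align}
P_t(X, Y) &= P_t(Y \mid X)\, P_t(X) \\
\text{Data drift:}\quad P_t(X) &\neq P_{t+1}(X) \\
\text{Concept drift:}\quad P_t(Y \mid X) &\neq P_{t+1}(Y \mid X)
\end{align}
```

The two can occur separately or together: a wetter season shifts $P_t(X)$ alone, while sediment accumulation changes how a given rainfall maps to discharge, that is, $P_t(Y \mid X)$.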

🔎 TECHNICAL NOTE

The Illusion of Statistical Stationarity in Energy Forecasting Systems

- According to the IPCC, the frequency of extreme weather events is increasing [4].

- Climate variability introduces long-term structural shifts.

- Energy infrastructure degrades over time, leading to performance loss.

Therefore, statistical stationarity assumptions are unreliable in long-term energy forecasting.

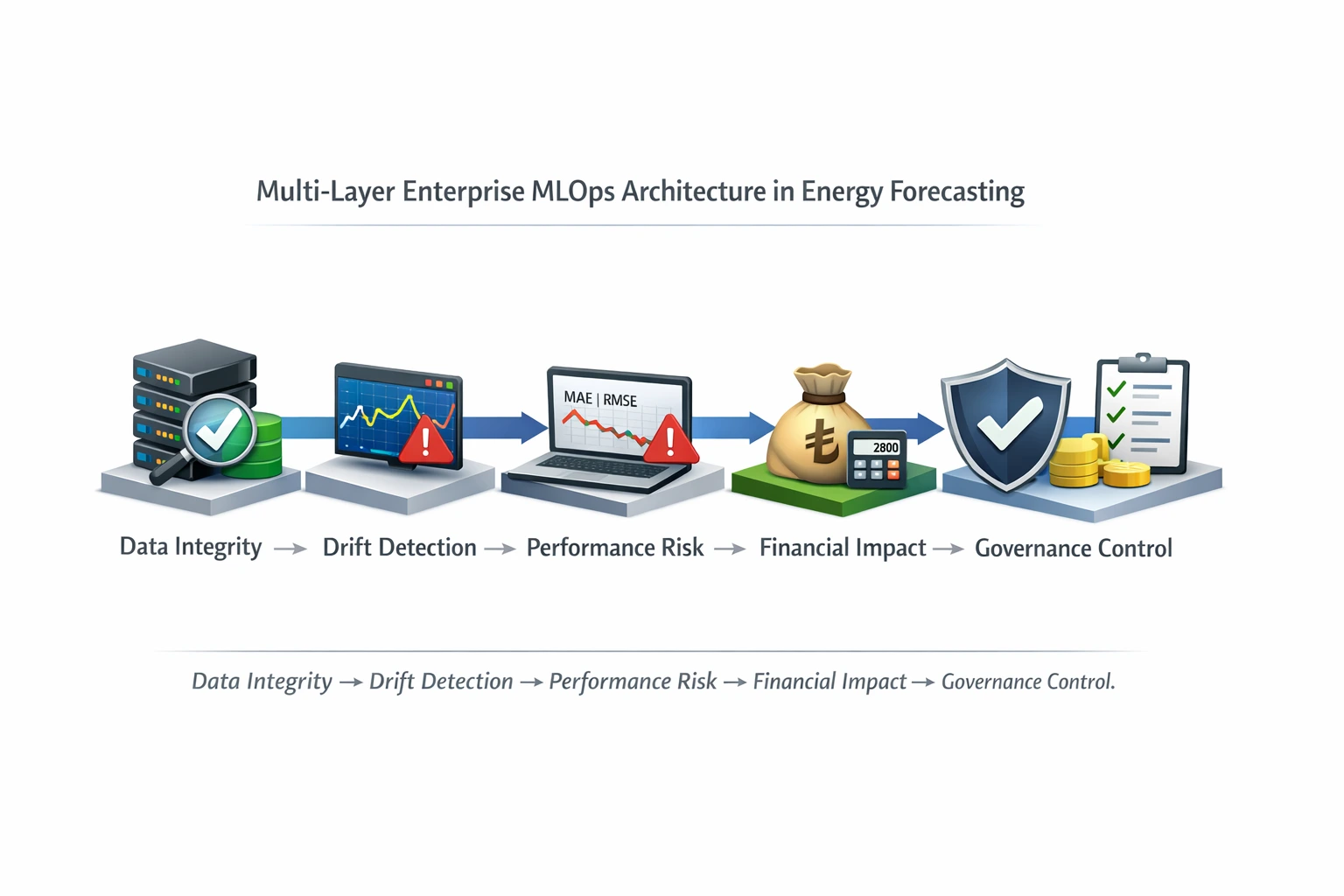

How It Works — Energy-Specific MLOps Architecture

A corporate MLOps architecture in the energy sector should consist of four primary layers: data health, distribution analysis, performance monitoring, and financial impact assessment.

1. Data Health Layer

This layer monitors:

- SCADA sensor anomalies

- Missing data ratios

- Timestamp synchronization issues

The NIST AI Risk Management Framework emphasizes data quality as a core element of AI risk management [5].
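The three checks listed above could be sketched roughly as follows. This is a minimal illustration using only the Python standard library; the function names, the hourly sampling step, and the plausible discharge range of 0 to 500 m³/s are assumptions made for the example, not properties of any particular SCADA system.

```python
from datetime import datetime, timedelta
from typing import List, Optional, Sequence, Tuple


def missing_ratio(values: Sequence[Optional[float]]) -> float:
    """Fraction of readings that are missing (represented as None)."""
    if not values:
        return 0.0
    return sum(v is None for v in values) / len(values)


def timestamp_gaps(
    timestamps: Sequence[datetime],
    expected_step: timedelta = timedelta(hours=1),  # assumed hourly sampling
) -> List[Tuple[datetime, datetime]]:
    """Consecutive timestamp pairs whose spacing differs from expected_step."""
    return [
        (prev, cur)
        for prev, cur in zip(timestamps, timestamps[1:])
        if cur - prev != expected_step
    ]


def out_of_range(
    values: Sequence[Optional[float]], low: float, high: float
) -> List[int]:
    """Indices of non-missing readings outside a physically plausible range."""
    return [
        i for i, v in enumerate(values)
        if v is not None and not (low <= v <= high)
    ]


# Hypothetical hourly discharge readings (m^3/s); hour 3 is absent from the feed.
start = datetime(2024, 1, 1, 0, 0)
timestamps = [start + timedelta(hours=h) for h in (0, 1, 2, 4, 5)]
discharge = [12.4, None, 13.1, -5.0, 12.9]

print("missing ratio:", missing_ratio(discharge))
print("timestamp gaps:", timestamp_gaps(timestamps))
print("out-of-range indices:", out_of_range(discharge, low=0.0, high=500.0))
```

Running such checks before any drift analysis helps separate sensor or transmission faults from genuine changes in the basin.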

2. Distribution Analysis Layer

Feature drift is detected using methods such as:

- Population Stability Index (PSI)

- Kolmogorov–Smirnov test

- Adaptive Windowing algorithms [6]

A PSI value above 0.25 indicates significant distribution shift.

📌 Info Card

PSI Interpretation Range:

| PSI value | Interpretation |
| --- | --- |
| 0.00–0.10 | Stable |
| 0.10–0.25 | Moderate change |
| > 0.25 | Critical drift |

Source: [6]
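As an illustration of how a PSI value might be obtained for a single feature, the sketch below bins a reference (training-period) sample at its own quantiles and compares bin shares with a recent sample. The choice of 10 quantile bins, the small floor `eps` for empty bins, and all function names are assumptions for this example; production implementations vary in their binning strategy.

```python
import math
from typing import List, Sequence


def quantile_edges(reference: Sequence[float], bins: int) -> List[float]:
    """Interior bin edges taken at evenly spaced quantiles of the reference."""
    ordered = sorted(reference)
    n = len(ordered)
    return [ordered[min(n - 1, (n * k) // bins)] for k in range(1, bins)]


def bin_shares(sample: Sequence[float], edges: Sequence[float], eps: float) -> List[float]:
    """Share of the sample in each bin, floored at eps so the log stays finite."""
    counts = [0] * (len(edges) + 1)
    for x in sample:
        counts[sum(x > e for e in edges)] += 1  # edges below x give the bin index
    return [max(c / len(sample), eps) for c in counts]


def psi(reference: Sequence[float], current: Sequence[float],
        bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index of `current` relative to `reference`."""
    edges = quantile_edges(reference, bins)
    ref = bin_shares(reference, edges, eps)
    cur = bin_shares(current, edges, eps)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


def psi_status(value: float) -> str:
    """Map a PSI value to the interpretation ranges given above."""
    if value < 0.10:
        return "stable"
    if value <= 0.25:
        return "moderate change"
    return "critical drift"


# Hypothetical precipitation feature: training period vs. a shifted recent window.
training = [float(x) for x in range(100)]
recent = [x + 50.0 for x in training]

print(psi_status(psi(training, training)))  # identical samples
print(psi_status(psi(training, recent)))    # strongly shifted sample
```

PSI is computed per feature, so a monitoring job would typically loop over all model inputs and report the worst offenders.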

3. Performance Metrics Layer

Traditional metrics such as MAE, RMSE, and MAPE are monitored [7]. However, in energy production forecasting, additional metrics must be evaluated:

- Peak Error (%)

- Lag Error (hour-based timing shift)

In hourly bidding systems, timing misalignment creates financial risk [1].
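The text does not define these two metrics formally, so the sketch below adopts one plausible reading: peak error as the difference between forecast and actual maxima relative to the actual maximum, and lag error as the offset in hours between the two peaks. Both definitions and the sample series are assumptions for illustration.

```python
from typing import Sequence


def peak_error_pct(actual: Sequence[float], forecast: Sequence[float]) -> float:
    """Absolute gap between forecast and actual peak, as % of the actual peak."""
    actual_peak = max(actual)
    return abs(max(forecast) - actual_peak) / actual_peak * 100.0


def lag_error_hours(actual: Sequence[float], forecast: Sequence[float]) -> int:
    """Hours by which the forecast peak trails (+) or leads (-) the actual peak.

    Assumes both series are hourly and aligned on the same timestamps.
    """
    actual_list, forecast_list = list(actual), list(forecast)
    return forecast_list.index(max(forecast_list)) - actual_list.index(max(actual_list))


# Hypothetical hourly generation (MW) around a flow event.
actual = [20.0, 25.0, 40.0, 60.0, 45.0, 30.0]
forecast = [20.0, 24.0, 30.0, 42.0, 54.0, 35.0]

print("peak error (%):", peak_error_pct(actual, forecast))
print("lag error (h):", lag_error_hours(actual, forecast))
```

In this made-up series the forecast peak is both lower and one hour late, a combination that an average metric over the whole day can easily mask.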

Figure 1

4. Financial Impact Layer

The financial impact layer simulates the revenue consequences of forecast error. This transforms model accuracy from a purely technical metric into a corporate risk indicator.

Example:

Forecast deviation: 8%

Peak price: 2800 TL/MWh

Deviation duration: 3 hours

The financial impact may be more significant than the statistical error magnitude. Without this layer, MLOps remains technical monitoring only.
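A rough way to turn the example figures into a monetary estimate is sketched below. The example does not state the scheduled output, so 65 MW is used purely as a hypothetical value. Valuing the deviated energy at the peak price is also a simplification, since actual imbalance settlement rules are more involved.

```python
def deviation_cost_tl(
    scheduled_mw: float,
    deviation_fraction: float,
    price_tl_per_mwh: float,
    hours: float,
) -> float:
    """Simplified revenue exposure: deviated energy valued at a single price."""
    deviated_energy_mwh = scheduled_mw * deviation_fraction * hours
    return deviated_energy_mwh * price_tl_per_mwh


# 8% deviation, 2800 TL/MWh, 3 hours (from the example above);
# scheduled_mw=65.0 is a hypothetical assumption, not given in the example.
cost = deviation_cost_tl(
    scheduled_mw=65.0,
    deviation_fraction=0.08,
    price_tl_per_mwh=2800.0,
    hours=3.0,
)
print(f"Estimated exposure: {cost:,.0f} TL")
```

Under these assumptions a single three-hour event costs on the order of tens of thousands of TL, which is why the same percentage error matters far more at peak prices than off-peak.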

Impact on Hydropower Plants

In a hydropower plant, discharge forecast error translates directly into production forecast error. Production forecast error directly affects Day-Ahead Market bidding strategy [1]. Due to hourly clearing mechanisms, errors during peak hours may grow disproportionately in financial terms.

For example, underestimating peak discharge by 20% may result in missed turbine optimization and potential revenue loss. Therefore, the forecasting system is not merely a technical component but also a financial reliability element.

The operational chain is as follows:

Discharge Forecast → Production Plan → Market Bid → Actual Generation → Balancing Cost

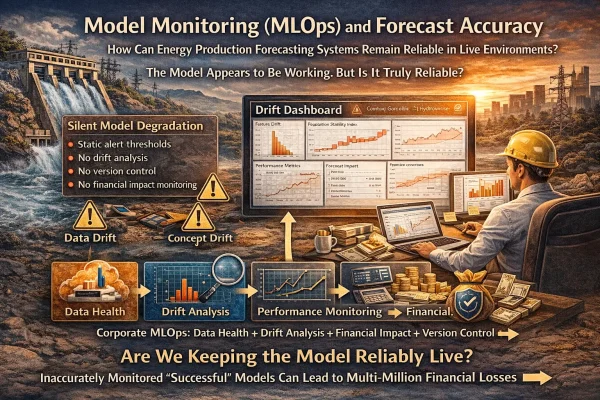

⚠️ Risk Card

Silent Model Degradation

- Static alert thresholds

- No drift analysis

- No version control

- No financial impact monitoring

Such a structure generates corporate risk.

Example Scenario / Mini Calculation

In a 65 MW hydropower plant over the last 120 days:

- MAPE increased from 10% to 21%

- Peak error increased from 18% to 42%

- Lag error increased from 1 hour to 3 hours

Because the alert threshold was defined only as MAPE > 30%, no warning was triggered.

The operational outcome included underbidding during three major flow events and an estimated 2.2 million TL revenue loss. The model remained technically functional, yet the forecasting system was no longer revenue-secure. This illustrates the difference between operational status and financial safety.
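The gap between a static threshold and a degradation-aware rule can be sketched with the scenario's own figures. Here the average-error figure is treated as the quantity compared against the 30% threshold. The relative-degradation limit of 1.5 times the baseline and all names are assumptions chosen for illustration, not recommended settings.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Metrics:
    error_pct: float       # average percentage error
    peak_error_pct: float  # error at peak, in percent
    lag_hours: float       # timing shift of the peak, in hours


def static_alert(current: Metrics, error_limit_pct: float = 30.0) -> bool:
    """The single static rule from the scenario: average error above 30%."""
    return current.error_pct > error_limit_pct


def degradation_alerts(baseline: Metrics, current: Metrics,
                       max_ratio: float = 1.5) -> List[str]:
    """Flag any metric that grew by more than max_ratio relative to baseline."""
    alerts = []
    for name in ("error_pct", "peak_error_pct", "lag_hours"):
        base, cur = getattr(baseline, name), getattr(current, name)
        if base > 0 and cur / base > max_ratio:
            alerts.append(f"{name}: {base} -> {cur}")
    return alerts


# Figures from the 120-day scenario above.
baseline = Metrics(error_pct=10.0, peak_error_pct=18.0, lag_hours=1.0)
current = Metrics(error_pct=21.0, peak_error_pct=42.0, lag_hours=3.0)

print("static alert fired:", static_alert(current))
print("degradation alerts:", degradation_alerts(baseline, current))
```

With these numbers the static rule stays silent while all three metrics breach the relative rule, which mirrors the silent degradation described in the scenario.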

Hydrowise / Renewasoft Approach

The Hydrowise forecasting system is designed with an energy-specific, multi-layer MLOps architecture. The system monitors not only model performance but also physical consistency and financial impact.

1. Multi-Layer Monitoring

- Data quality

- Drift analysis

- Peak error

- Financial impact

2. Drift Dashboard

Feature distribution shifts, performance trends, and revenue impact are visualized through a dedicated monitoring interface.

3. Human-in-the-Loop

Retraining recommendations are generated automatically; however, model versions are not changed without operator approval. Full automation is not recommended in critical infrastructure systems [8][9].

4. Governance and Versioning

Each model version is stored with:

- Training data range

- Feature set

- Drift report

- Approval timestamp

This structure aligns with EU AI Act and ISO AI risk governance frameworks [9][10]. Through this approach, the forecasting system becomes not only accurate but also corporately reliable.
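A version record with an approval gate might look like the sketch below. The class, field, and function names are hypothetical and do not describe the actual Hydrowise schema; the sketch only illustrates how the four stored items and the operator-approval rule could fit together.

```python
from dataclasses import dataclass, replace
from datetime import date, datetime, timezone
from typing import Optional, Tuple


@dataclass(frozen=True)
class ModelVersion:
    version: str
    training_start: date              # training data range (start)
    training_end: date                # training data range (end)
    feature_set: Tuple[str, ...]
    drift_report: dict                # e.g. per-feature PSI values
    approved_by: Optional[str] = None
    approved_at: Optional[datetime] = None  # approval timestamp


def approve(candidate: ModelVersion, operator: str) -> ModelVersion:
    """Return a copy of the candidate stamped with operator and UTC time."""
    return replace(candidate, approved_by=operator,
                   approved_at=datetime.now(timezone.utc))


def promote(active: ModelVersion, candidate: ModelVersion) -> ModelVersion:
    """Serve the candidate only if an operator has approved it."""
    return candidate if candidate.approved_at is not None else active


# Hypothetical versions and feature names.
active = approve(
    ModelVersion("v1", date(2020, 1, 1), date(2023, 12, 31),
                 ("precipitation", "temperature"), {"precipitation": 0.04}),
    operator="operator-1",
)
candidate = ModelVersion("v2", date(2021, 1, 1), date(2024, 12, 31),
                         ("precipitation", "temperature"), {"precipitation": 0.31})

print(promote(active, candidate).version)                          # unapproved candidate
print(promote(active, approve(candidate, "operator-1")).version)   # approved candidate
```

Keeping the record immutable means an approval produces a new stamped copy instead of silently editing history, which supports traceability.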

Frequently Asked Questions

- How often should a model be retrained?

A hybrid approach based on drift detection and performance degradation is recommended.

- Is MAPE sufficient?

No. Peak error and lag error must also be monitored.

- Does drift always imply model failure?

No. Data quality must first be validated.

- Is fully automated retraining safe?

In critical infrastructure systems, human oversight is recommended.

- Why is AI governance important?

Regulatory frameworks such as the EU AI Act and ISO AI standards require monitoring and traceability [9][10].

Conclusion

An energy production forecasting system is not merely an analytical tool but an operational asset. Model accuracy is directly linked to financial stability and operational security. Drift is inevitable; an unmonitored model will gradually lose reliability.

The Hydrowise approach transforms forecasting systems into observable, measurable, and intervention-ready infrastructure.

Request a demo to explore the Hydrowise monitoring dashboard and conduct a drift analysis of your current forecasting system.